If you are preparing for FRM in 2026, you are not entering the same risk management industry that existed five years ago. AI tools now handle parts of the process that used to demand hours of manual work. Most large institutions have already moved to some version of this hybrid model. The ones that have not are actively getting there.

AI is taking over parts of risk management. But it is not taking responsibility. Hybrid workflows do not reduce the need for trained professionals. They raise the bar. Someone still needs to design, validate, interpret, and take accountability when models get it wrong.

That's the job of an FRM.

Key Takeaways

- AI is reshaping risk management workflows, but the shift is about augmentation, not replacement.

- The tasks being automated are transactional. The roles being created require judgment, governance, and accountability.

- FRM remains relevant because its core strengths map directly to what AI cannot own: model validation, ethical oversight, and regulatory responsibility.

- GARP's 2026 syllabus reflects this shift. AI model risk, explainability, and governance frameworks are now central to Part II.

- The most valuable FRM professionals in 2026 will be those who combine quantitative rigour with practical AI fluency.

- Regulations and standards including BCBS 350, ISO/IEC 23894, and SEBI's AI disclosure norms all point in the same direction: human accountability for AI-driven risk decisions is a compliance requirement, not a best practice.

- The career edge is shifting from running risk models to knowing when to trust them, when to challenge them, and how to govern them responsibly.

This is where the conversation around AI and the FRM credential gets interesting. It is no longer a question of whether AI will change risk management. It already has. What matters now is how it evolves the role of existing professionals, and which skills carry the most weight when AI is handling more of the execution.

AI in Risk Teams: What the Work Actually Looks Like

Most risk teams using AI today are not running a single, centralized system. Instead, they rely on different tools for different problems, and the extent to which these tools are integrated varies from one institution to another.

The way AI in risk management is often discussed in headlines can feel broad and all-encompassing. In practice, it is far more focused. Teams tend to use AI in specific parts of the workflow where it adds clear value.

And when you look across enough teams, a pattern starts to emerge: the same set of use cases appears again and again.

Data screening and preprocessing

Risk models run on data. Earlier, cleaning that data, flagging anomalies, and checking for gaps or inconsistencies was largely manual work handled by analysts.

Today, much of that process is automated. AI tools can screen data, highlight issues, and reduce the time between raw input and usable output. As a result, the analyst's role has shifted from doing the screening to reviewing and validating what the system flags.
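
To make that shift concrete, here is a minimal sketch of the kind of first-pass screen these tools automate. It is an illustration, not any vendor's method. The function name `screen_exposures` is hypothetical. It assumes a plain list of exposure values and flags missing entries and outliers using a median/MAD-based robust z-score with a 3.5 cut-off, which is a common rule of thumb rather than a standard.

```python
import math
import statistics


def screen_exposures(values, z_threshold=3.5):
    """First-pass data screen: flag missing entries and outliers for analyst review.

    Hypothetical helper. Outliers are scored with a robust (median/MAD) z-score;
    the 3.5 cut-off is a common rule of thumb, not a regulatory standard.
    """
    # Missing entries (None or NaN) are reported as data gaps.
    gaps = [i for i, v in enumerate(values)
            if v is None or (isinstance(v, float) and math.isnan(v))]
    gap_set = set(gaps)
    clean = [(i, v) for i, v in enumerate(values) if i not in gap_set]
    if not clean:
        return {"gaps": gaps, "outliers": []}

    nums = [v for _, v in clean]
    med = statistics.median(nums)
    mad = statistics.median([abs(v - med) for v in nums])

    outliers = []
    if mad > 0:
        for i, v in clean:
            # 0.6745 rescales MAD so scores are comparable to standard z-scores.
            score = 0.6745 * (v - med) / mad
            if abs(score) > z_threshold:
                outliers.append(i)
    return {"gaps": gaps, "outliers": outliers}


sample = [100.0, 102.5, None, 98.7, 101.2, 5000.0, float("nan"), 99.9]
print(screen_exposures(sample))  # {'gaps': [2, 6], 'outliers': [5]}
```

The output is a list of positions, not a correction. That mirrors the workflow above: the screen flags, and the analyst decides whether 5,000 is a data error or a genuine exposure.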

Model monitoring and anomaly detection

Once a model is deployed, it needs to be watched closely. AI systems can monitor model outputs in real time, flag when a model's behaviour deviates from expected patterns, and alert teams before a small drift becomes a larger problem. This is particularly relevant in credit and market risk, where model performance can shift quickly with changing conditions.
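
One widely used drift metric in credit risk is the Population Stability Index (PSI), which compares how a model's scores (or one of its inputs) are distributed today against how they were distributed at development time. The sketch below is an assumption about how such a check might be written, not a description of any particular monitoring system. The function names are illustrative, and the 0.1 / 0.25 thresholds are an industry rule of thumb rather than a regulatory limit.

```python
import math


def population_stability_index(expected, actual, eps=1e-6):
    """PSI between two binned distributions given as lists of bin proportions.

    PSI = sum over bins of (actual - expected) * ln(actual / expected).
    eps stands in for empty bins so the logarithm stays defined.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi, watch=0.1, alert=0.25):
    """Map PSI to an action using a common industry rule of thumb (an assumption)."""
    if psi >= alert:
        return "escalate"
    if psi >= watch:
        return "investigate"
    return "stable"


# Share of scores in each of five score bands: at development vs. this month.
baseline = [0.10, 0.20, 0.40, 0.20, 0.10]
current = [0.05, 0.10, 0.30, 0.30, 0.25]
psi = population_stability_index(baseline, current)
print(round(psi, 3), drift_status(psi))  # 0.311 escalate
```

The metric only raises the flag. Deciding whether the shift reflects a broken model or a genuinely changed borrower population is still the risk team's call.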

Scenario generation and stress testing

Traditionally, stress testing meant running a fixed set of predefined scenarios. AI tools can now generate a wider range of scenarios, identify non-obvious risk combinations, and stress-test portfolios against conditions that historical data alone would not surface.
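
The sketch below shows the mechanics in their simplest form. It swaps in plain Monte Carlo sampling of two correlated risk factors for the AI-based generators described above; a generative model would replace the sampling step. The factor volatilities, correlation, and exposures are hypothetical inputs, the P&L is assumed to be linear in the factor moves, and the function names are illustrative.

```python
import math
import random


def generate_scenarios(n, vol_equity, vol_rates, rho, seed=42):
    """Draw n joint shocks to two hypothetical risk factors with correlation rho."""
    if not -1.0 <= rho <= 1.0:
        raise ValueError("rho must lie in [-1, 1]")
    rng = random.Random(seed)  # seeded so the scenario set is reproducible
    scenarios = []
    for _ in range(n):
        z1, z2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        # 2x2 Cholesky step: mixes z1 into the second shock to induce correlation rho.
        equity_shock = vol_equity * z1
        rates_shock = vol_rates * (rho * z1 + math.sqrt(1.0 - rho ** 2) * z2)
        scenarios.append((equity_shock, rates_shock))
    return scenarios


def stress_portfolio(scenarios, equity_exposure, rates_exposure):
    """Linear P&L per scenario, plus the index of the worst scenario."""
    pnl = [equity_exposure * eq + rates_exposure * rt for eq, rt in scenarios]
    worst = min(range(len(pnl)), key=pnl.__getitem__)
    return pnl, worst


scenarios = generate_scenarios(10_000, vol_equity=0.20, vol_rates=0.01, rho=-0.5)
pnl, worst = stress_portfolio(scenarios, equity_exposure=1_000_000, rates_exposure=-5_000_000)
print("worst factor moves:", scenarios[worst], "P&L:", round(pnl[worst]))
```

Returning the index of the worst scenario, rather than just the loss figure, is deliberate: the factor combination behind the tail is what tells an analyst which joint moves the portfolio is actually exposed to.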

Early-stage credit and counterparty assessment

In credit risk, AI is being used to process large volumes of borrower or counterparty data faster than traditional methods allow. It surfaces patterns and signals that inform, though do not replace, the analyst's final assessment.
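
As a minimal sketch of that division of labour, assume a logistic scoring model whose probability of default (PD) only routes a case to a level of analyst review. The coefficients, feature names, and the 2% and 20% band edges below are all hypothetical, not taken from any real lender.

```python
import math

# Hypothetical coefficients for illustration; a real model would be estimated
# on historical default data and independently validated before use.
COEFFICIENTS = {"debt_to_income": 2.5, "missed_payments": 0.8, "years_with_bank": -0.15}
INTERCEPT = -3.0


def probability_of_default(borrower):
    """Logistic score: PD = 1 / (1 + exp(-(intercept + sum of coefficient * feature)))."""
    z = INTERCEPT + sum(coef * borrower[name] for name, coef in COEFFICIENTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def triage(prob, low=0.02, high=0.20):
    """Route a case to a review level; the model never issues the final decision."""
    if prob < low:
        return "standard review"
    if prob > high:
        return "priority analyst review"
    return "enhanced analyst review"


applicants = {
    "A": {"debt_to_income": 0.2, "missed_payments": 0, "years_with_bank": 10},
    "B": {"debt_to_income": 0.6, "missed_payments": 3, "years_with_bank": 1},
}
for name, data in applicants.items():
    prob = probability_of_default(data)
    print(name, round(prob, 3), triage(prob))
# A 0.018 standard review
# B 0.679 priority analyst review
```

Every branch of `triage` ends with a person. The score decides how much scrutiny a file gets, not whether credit is granted.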

FRM Expertise Still Leads Here

Not everything has moved. Several parts of the risk workflow remain firmly in human hands, and for good reason.

Model validation requires a trained professional to assess whether a model is doing what it claims to do, whether its assumptions hold, and whether its outputs can be trusted in the context it is being used. This is not a task that can be delegated to another model.
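
Validation involves far more than any single statistical test, but backtesting a VaR model is one concrete piece of it. A standard check is Kupiec's proportion-of-failures (POF) test, which asks whether the number of days on which losses exceeded VaR is consistent with the model's stated confidence level. The sketch below assumes the exception count is already available; the function names are illustrative.

```python
import math

CHI2_1DF_95 = 3.841  # 95% critical value of the chi-square distribution, 1 degree of freedom


def kupiec_pof(exceptions, observations, coverage=0.99):
    """Kupiec proportion-of-failures likelihood-ratio statistic.

    LR = -2 * [ln L(p) - ln L(x/T)], where ln L(q) = (T - x) ln(1 - q) + x ln(q),
    p = 1 - coverage, x = exceptions and T = observations.
    """
    x, t = exceptions, observations
    if t <= 0 or not 0 <= x <= t:
        raise ValueError("need observations > 0 and 0 <= exceptions <= observations")
    p = 1.0 - coverage

    def log_lik(q):
        # Skip terms with a zero count so 0 * ln(0) is treated as 0.
        ll = 0.0
        if t - x > 0:
            ll += (t - x) * math.log(1.0 - q)
        if x > 0:
            ll += x * math.log(q)
        return ll

    return -2.0 * (log_lik(p) - log_lik(x / t))


def backtest_verdict(exceptions, observations, coverage=0.99):
    lr = kupiec_pof(exceptions, observations, coverage)
    verdict = "reject calibration" if lr > CHI2_1DF_95 else "no evidence of miscalibration"
    return verdict, lr


# One year (250 trading days) of a 99% VaR model: 2.5 exceptions expected.
for exceptions in (2, 8):
    verdict, lr = backtest_verdict(exceptions, observations=250)
    print(exceptions, round(lr, 2), verdict)
# 2 0.11 no evidence of miscalibration
# 8 7.73 reject calibration
```

Zero exceptions in 250 days also fails this test at the 95% level (LR of roughly 5.03), because a model that is never breached is likely too conservative. That is exactly the kind of result a validator has to interpret rather than simply accept.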

Regulatory reporting and accountability require a named individual or function to stand behind the numbers. Regulators do not accept model outputs as self-explanatory. Someone with the training to interpret, defend, and if necessary, challenge those outputs needs to be in the room.

Ethical and governance decisions sit entirely outside what AI can own. Deciding how much risk appetite is appropriate, how to handle model uncertainty in a credit decision that affects a real borrower, or when to override a model's recommendation are judgment calls that require context, accountability, and professional responsibility.

Info:

Interested in pursuing FRM? Begin your journey with FRM Part 1 today.

What the FRM Curriculum Says About AI

GARP has not ignored the shift. The 2026 FRM syllabus makes a deliberate move toward AI and model risk as core competencies, particularly in Part II, so that the curriculum tracks where risk management is heading.

Here is how the key AI-related topics map across the FRM syllabus:

| FRM Part | Topic Area | What It Covers |
|---|---|---|
| Part I | Quantitative Methods | Statistical foundations that underpin AI model design |
| Part II | Model Risk Management | Validation, limitations, and governance of AI models |
| Part II | Market Risk | AI-assisted scenario generation and stress testing |
| Part II | Operational Risk | AI-driven anomaly detection and process risk |
| Part II | Current Issues | Explainability frameworks, BCBS 350, AI governance norms |

Info:

Check out the latest FRM 2026 Syllabus Changes to know more about what is covered in the curriculum.

Why the FRM Remains Relevant

The case for the FRM in an AI-driven environment is structural. AI systems in risk management need to be built on sound risk frameworks, validated against rigorous standards, and governed by professionals who understand both the models and the regulatory context they operate in. The FRM provides exactly that foundation.

Credit risk, market risk, operational risk, and model risk are not replaced by AI; they are the framework within which it operates. AI does not rewrite the categories of risk. That is why deep knowledge of these areas is a stronger foundation than technical fluency alone. A professional who understands the framework is better placed to deploy, challenge, and govern AI systems than one who understands only the technology.

The Skills Gap AI Has Created

The growth of AI in risk management has created a specific kind of demand. Institutions need professionals who can:

- Validate AI models against regulatory and internal standards

- Interpret model outputs in a credit, market, or operational risk context

- Apply explainability tools like SHAP and LIME to make model decisions defensible

- Identify model drift and know when to escalate or override

- Take accountability for risk decisions made with AI support
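
SHAP builds on Shapley values from cooperative game theory. To show what an attribution actually is, the sketch below computes exact Shapley values from scratch for a single prediction; it is not the `shap` library's API. It assumes a hypothetical three-feature credit model and replaces "absent" features with one baseline applicant, a simplification of the background dataset SHAP normally uses. Exact enumeration grows exponentially with the number of features, which is why the library relies on approximations and model-specific algorithms for larger models.

```python
import itertools
import math


def shapley_values(model, x, baseline):
    """Exact Shapley attributions for a single prediction.

    Features outside a coalition take the baseline value (a single reference point,
    a simplification of SHAP's background dataset). Cost grows as 2**n in features.
    """
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size: |S|! (n - |S| - 1)! / n!
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for coalition in itertools.combinations(others, size):
                without_i = [x[j] if j in coalition else baseline[j] for j in range(n)]
                with_i = list(without_i)
                with_i[i] = x[i]
                phi[i] += weight * (model(with_i) - model(without_i))
    return phi


def credit_model(features):
    """Hypothetical PD model: logistic of a linear score with one interaction term."""
    dti, missed, tenure = features
    z = -3.0 + 2.5 * dti + 0.8 * missed - 0.15 * tenure + 0.5 * dti * missed
    return 1.0 / (1.0 + math.exp(-z))


applicant = [0.6, 3, 1]   # debt-to-income, missed payments, years with bank
reference = [0.3, 0, 5]   # hypothetical "typical" applicant used as the baseline
phi = shapley_values(credit_model, applicant, reference)
for name, contribution in zip(["debt_to_income", "missed_payments", "years_with_bank"], phi):
    print(f"{name}: {contribution:+.3f}")
print("sum:", round(sum(phi), 3),
      "score gap:", round(credit_model(applicant) - credit_model(reference), 3))
```

The attributions add up to the gap between this applicant's score and the baseline score (the "efficiency" property). That additivity is what makes them usable in a written explanation of a credit decision.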

This is not a role for a BTech graduate who has picked up risk on the side. It is a risk role that requires technology literacy. The FRM builds the risk foundation, and AI fluency strengthens it.

FRM Careers in an AI-Driven Market

The demand for risk professionals with AI fluency is not a future projection. Hiring patterns across banks, asset managers, and fintech firms in 2025 and 2026 already reflect it. The roles being created sit at the intersection of risk expertise and technical capability, and they pay significantly more than traditional risk analyst positions.

The career path for an FRM professional in this environment tends to follow a clear progression:

| Role | Experience Level | Typical Salary (India, per annum) | Core Requirement |
|---|---|---|---|
| AI Risk Analyst | 0 to 3 years | Rs 12 to 20L | FRM + Python basics |
| Model Risk Analyst | 2 to 5 years | Rs 18 to 30L | FRM + model validation |
| Model Risk Manager | 5 to 8 years | Rs 30 to 50L | FRM + AI governance |
| Head of AI Risk / CRO Track | 8+ years | Rs 50L+ | FRM + leadership + regulatory depth |

Info:

Learn more about FRM job scope in India for more insights.

Skills That Command a Premium

The premium for combining FRM expertise with AI fluency is significant. Most institutions are paying 25 to 40 percent more for professionals who bring both. The differentiating skills are consistent across job postings:

- Python and SQL for data analysis and model interrogation

- ML model validation techniques

- Explainability tools such as SHAP and LIME

- Working knowledge of BCBS 350 and SR 11-7 model risk guidelines

- Experience with stress testing and scenario analysis using AI-assisted tools

The FRM gets you into the room. These skills determine where you sit in it.

Traditional Risk Management vs AI: A Practical Comparison

AI has not replaced traditional risk management. It has changed the performance profile of certain tasks within it. The difference is worth understanding concretely.

| Metric | Traditional Risk Management | AI-Assisted Risk Management | Why It Matters |
|---|---|---|---|
| Processing Speed | 24 to 48 hours for VaR calculations | Real-time | Faster detection means earlier intervention in volatile markets |
| Fraud Detection Accuracy | 85 to 90% | 95 to 99% | Higher accuracy reduces false positives and financial losses |
| Explainability | High | Medium, improving with XAI tools | Regulators require defensible, interpretable model outputs |
| Model Adaptation | Quarterly updates | Continuous learning | Markets move faster than quarterly recalibration allows |
| Regulatory Compliance Cost | Lower | Higher, due to governance requirements | AI governance frameworks add audit, validation, and documentation overhead |
| Scalability | Limited by analyst capacity | Effectively unlimited | AI can monitor thousands of positions simultaneously without additional headcount |
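
The VaR figure in the first row is, at its core, a quantile of a profit-and-loss distribution. The minimal historical-simulation sketch below assumes a list of daily P&L values is already available; the function name and the quantile convention (take the k-th worst day) are assumptions, and interpolating between observations is another common choice that gives slightly different numbers.

```python
import math


def historical_var(pnl_history, confidence=0.99):
    """One-day historical-simulation VaR, returned as a positive loss amount.

    Convention (an assumption): VaR is the k-th worst P&L, k = ceil(n * (1 - confidence)).
    """
    if not pnl_history:
        raise ValueError("need at least one P&L observation")
    ordered = sorted(pnl_history)  # worst (most negative) outcome first
    # round() absorbs float noise such as 1 - 0.99 == 0.010000000000000009
    k = max(1, math.ceil(round(len(ordered) * (1 - confidence), 9)))
    return -ordered[k - 1]


daily_pnl = [-120, 35, -60, 80, 15, -200, 50, -10, 95, -45]  # hypothetical, in thousands
print(historical_var(daily_pnl, confidence=0.90))  # 200
```

With only ten observations the 90% VaR is simply the worst day, which illustrates why the choice of history length and quantile convention is a modelling decision someone has to own.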

This is not a replacement story. AI and traditional risk management have different strengths, and the institutions performing best in 2026 are the ones that have figured out how to use both. AI leads on speed and scale. Traditional risk management leads on explainability, regulatory familiarity, and governance.

Most institutions have stopped debating which to use and started figuring out how to combine them. Deloitte's 2026 survey puts a number on this: 78% of risk leaders now operate on a human-in-the-loop model, where AI handles the analysis and the FRM professional handles the judgment.

Real Careers: What the Combination Looks Like in Practice

These are not composite profiles. They are real career trajectories from professionals who have already built the FRM and AI combination and moved up because of it.

HDFC Bank: AI Credit Model Validator

Three years into his career, an FRM Part II holder added Python to his toolkit and stepped into a model risk analyst role at 28 LPA. Seven years later, he was heading the model risk function at 52 LPA. The FRM gave him the foundation to understand what the models were doing.

Together, the two skills pointed his career in a direction that a credential alone rarely does. Python was not decorative. It was the variable that changed the outcome. In 2019, that pairing was uncommon. By 2026, banks were writing it into job descriptions.

PhonePe: Fraud Detection Lead

She started in a traditional operational risk role at 18 LPA. When she paired her FRM background with anomaly detection skills, she did not just get a raise. She moved into a different kind of job entirely. Five years later, she was leading fraud detection at 35 LPA, working at the intersection of risk judgment and machine learning that most finance professionals have not yet reached.

Global Capability Centre: GenAI Governance

Eight years in, an FRM professional who had quietly built GenAI governance expertise received something rare: an international transfer. The Singapore posting came with an SGD 180K package. It was not a reward for tenure. It was a reward for being one of the few people in the room who understood both the risk frameworks and the technology well enough to govern them together.

The pattern across all three is the same. FRM opened the door. AI fluency determined how far they walked through it.

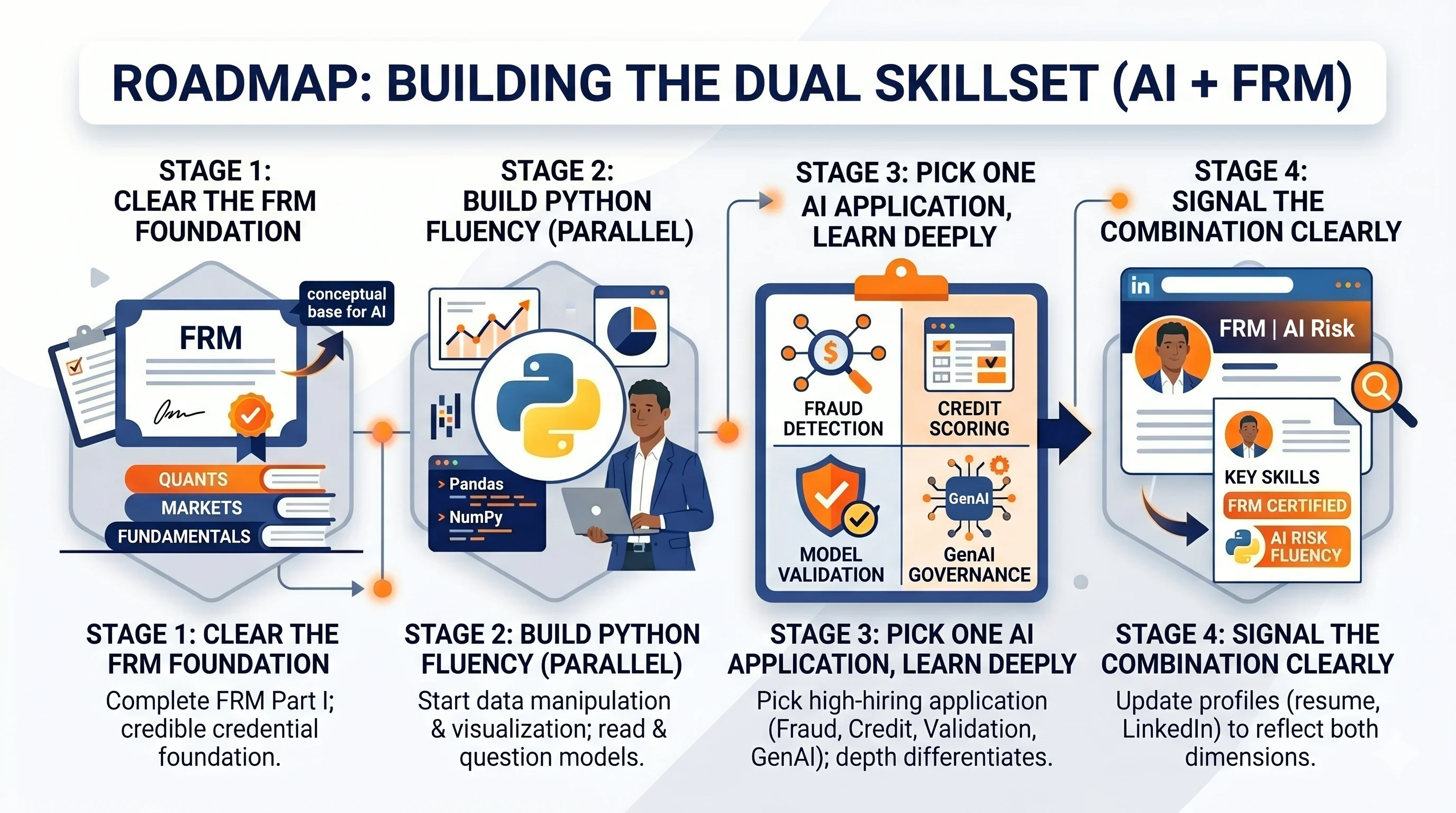

The Roadmap: Building the Combination

This is not about doing everything at once. It is about sequencing the right skills at the right stage.

Stage 1: Clear the FRM foundation

Stage 1: Clear the FRM foundation

Complete FRM Part I before adding any AI layer. The credential needs to be credible on its own first. Part I covers quantitative methods, financial markets, and risk fundamentals, all of which provide the conceptual base for understanding what AI models are actually doing inside financial systems.

Stage 2: Build Python fluency in parallel with FRM Part II

Start with data manipulation and visualization. Pandas, NumPy, and basic regression are enough to begin. The goal at this stage is not to become a data scientist. It is to become a risk professional who can read, run, and question a model.
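
As an example of "read, run, and question", the sketch below estimates a portfolio's beta against a market index by ordinary least squares and reports R-squared, the number that tells you how much to trust that beta. It is written in plain Python so it runs without installs; with NumPy, `numpy.polyfit(x, y, 1)` returns the same slope and intercept. The return series and the function name are illustrative.

```python
def ols_fit(x, y):
    """Ordinary least squares for y = alpha + beta * x.

    Returns (alpha, beta, r_squared); r_squared is the share of y's variance the line explains.
    """
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two matching series with at least 3 points")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x has no variation, so beta is undefined")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    beta = sxy / sxx
    alpha = mean_y - beta * mean_x
    ss_res = sum((yi - (alpha + beta * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    # R-squared is undefined when y never varies.
    r_squared = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    return alpha, beta, r_squared


# Hypothetical daily returns: a market index and a portfolio.
market = [0.010, -0.020, 0.015, 0.030, -0.010, 0.005]
portfolio = [0.012, -0.022, 0.019, 0.037, -0.011, 0.008]
alpha, beta, r2 = ols_fit(market, portfolio)
print(f"alpha={alpha:.4f}  beta={beta:.2f}  R^2={r2:.2f}")
```

A beta that comes with a low R-squared is a prompt to question the model, not a number to report as-is.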

Stage 3: Pick one AI application and learn deeply

Fraud detection, credit scoring, model validation, and GenAI governance are the four highest-hiring applications in 2026. Pick the one that aligns with your target role and build a project or certification around it. Generalism does not differentiate. Depth does.

Stage 4: Signal the combination clearly

Update your resume, LinkedIn, and any professional profiles to reflect both dimensions. Most hiring managers in this space are scanning for the pairing explicitly. If it is buried or absent, the profile does not surface.

Who This Is For

Not every finance professional needs this combination. But for a specific profile, it is close to a career-defining move.

This roadmap is built for:

- FRM candidates at Part I or Part II who want to understand where the credential leads in 2026 and how to maximize its value from the start

- Working risk professionals with 2 to 6 years of experience who feel their trajectory is plateauing and want a lever that is both credible and in demand

- Finance graduates targeting model risk, fraud, or credit roles who want a differentiator that goes beyond the standard credential stack

- Professionals in GCCs or MNC risk functions who have seen AI governance roles open up internally and want to position themselves deliberately

This is not for someone looking for a shortcut. The combination takes 18 to 24 months to build properly. What it offers in return is a career profile that is genuinely difficult to replicate and increasingly difficult for banks and fintechs to ignore.

Conclusion

AI is not the threat to careers that most people assume it is. The work it displaces was never the core of the job. The work it cannot do is exactly where the FRM sits.

Governance, judgment, accountability, and the ability to question a model that is producing confident but flawed outputs: these are not technical problems. They are professional ones, and they require a professional foundation to solve.

The risk professionals who will matter most over the next decade are not defined by whether they use AI. They are defined by whether they understand it well enough to know where it stops and where their judgment begins.

FAQs

Q: How has the FRM 2026 syllabus changed for AI topics?

A: Part I is unchanged. Part II emphasizes AI model risk (validation, and explainability via SHAP/LIME), governance (BCBS 350, ISO/IEC 23894), AI-assisted scenario generation in Market Risk, and anomaly detection in Operational Risk. Liquidity Risk and Current Issues also see major updates.

Q: Is FRM still relevant with AI taking over risk management?

A: Yes. AI handles execution (data cleaning, monitoring); FRM professionals own governance, validation, and accountability. Regulators require human oversight, and 78% of risk leaders now use human-in-the-loop models.

Q: What AI skills do FRM candidates need in 2026?

A: Python/SQL for data interrogation, SHAP/LIME for explainability, model drift detection, and BCBS 350 compliance. Combined with the FRM, these skills carry a 25 to 40% salary premium in model risk roles.

Q: AI vs traditional risk management: Which is better for FRM careers?

A: A hybrid approach wins. AI excels at speed and scale (95 to 99% fraud detection accuracy), while traditional risk management owns explainability and regulatory defense. FRM professionals govern the combination, and demand for that profile is up 35% in fintech.